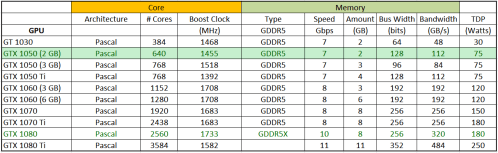

For today’s review, I’m taking a quick look at a little 75 watt graphics card from a few years ago: the Nvidia GTX 1050 2GB. As far as the now somewhat aged Pascal architecture goes, this one is pretty near the bottom of the pile in terms of performance. But, that means you can also get it at a decent discount. I got mine earlier this year for a mere $75 shipped (the card’s MSRP was about $110 back in October 2016).

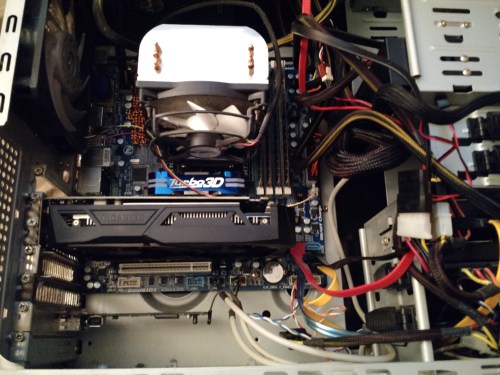

As you can see above, I picked up a beefy version…a big dual fan open cooler design by Gigabyte. This is massive overkill for a card with a meager 75 watt TDP (it doesn’t even have a connection for supplemental PCI Express power).

So, you might ask why I would bother reviewing a card of this type, given that all my testing to date has shown that the higher-end cards are also more efficient. Well, in this case I realized that summer in New England means I run a lot of air conditioning, and any extra wattage my computer uses is ultimately dumped into the house as heat. In the winter that’s a good thing, but in the summer it just makes air conditioners do more work removing that waste heat. By running a low-end card, I hoped to continue contributing to Folding@Home while minimizing the electric bill and environmental impact.

Testing was done in the usual manner, using my standard test rig (Windows 10 running the V7 Client) and measuring power at the wall with a watt meter. Normally this is the point where I put up a bunch of links to those previous articles, or sometimes describe in detail the methods and hardware used. But, today I’m feeling lazy so I’m skipping all that. The key is that I am as consistent as I can be (although now I am on Windows 10 due to Windows 7 approaching end of life). If you would like more info on how I run my tests, just go back a few posts to the 1080 and 1070 testing.

Results:

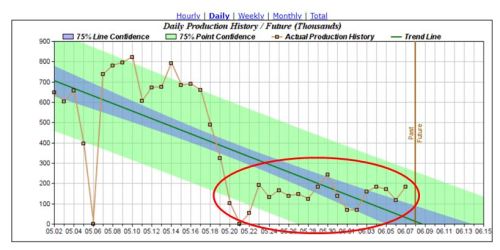

The circled region on the plot shows what happened to my Points Per Day (PPD) when I “downgraded” from a 1080 to the 1050. The average PPD of about 150K pales in comparison to the 1080. However, when you consider that this is similar performance to cards like the 300 watt dual-GPU GTX 690 from 2012 (a $1000 card even back then), things don’t seem so bad.

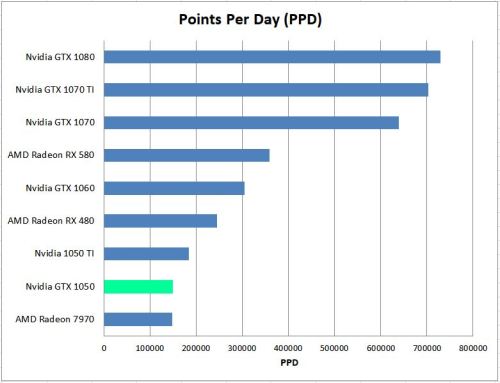

Here’s how the little vanilla 1050 stacks up against the other cards I have tested:

From a performance standpoint, this card isn’t going to win any races. However, as I mentioned above, it is actually pretty good compared to high-end old cards (the only one of which I have tested is the Radeon 7970, which cost $550 back in December 2011 and uses nearly three times the power of the 1050).

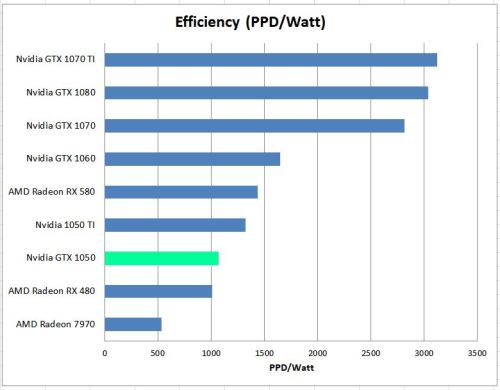

Efficiency is where things get interesting:

Here, you can see that the GTX 1050 has leapfrogged up the chart by one place, and is slightly more efficient than my copy of an AMD RX 480, which uses the 2016 Polaris architecture, something supposedly designed for efficiency according to AMD. Still, for about $10 more on eBay, you can get a used 1050 Ti, which shows a marked improvement in both efficiency and performance vs the 1050. In my case, I found that both the GTX 1050 and GTX 1050 Ti drew the same amount of power at the wall (140 watts for the whole system). Thus, for my summertime folding, I would have been better off keeping the old GTX 1050 Ti, since it does more work for exactly the same wall power consumption.
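For anyone who wants to run the numbers themselves, here is a rough sketch of the efficiency arithmetic. It assumes efficiency means average PPD divided by whole-system wall power, and it uses only the two figures quoted in this post (about 150K PPD and 140 watts at the wall). The function and variable names are made up for illustration, and the kWh helper is just a unit conversion.

```python
def ppd_per_watt(ppd: float, wall_watts: float) -> float:
    """Folding efficiency: points per day divided by whole-system wall power."""
    if wall_watts <= 0:
        raise ValueError("wall_watts must be positive")
    return ppd / wall_watts


def daily_kwh(wall_watts: float) -> float:
    """Energy drawn from the wall over 24 hours, in kilowatt-hours."""
    return wall_watts * 24 / 1000


# Figures quoted in this post for the GTX 1050 (the PPD is an approximate average).
GTX_1050_PPD = 150_000
SYSTEM_WALL_WATTS = 140

efficiency = ppd_per_watt(GTX_1050_PPD, SYSTEM_WALL_WATTS)
energy = daily_kwh(SYSTEM_WALL_WATTS)
print(f"{efficiency:.0f} PPD/W, {energy:.2f} kWh/day")
```

Because the 1050 Ti drew the same 140 watts in my system, plugging its higher PPD into the same function gives a proportionally higher PPD per watt, which is the whole argument for the Ti.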

Conclusion

Nvidia’s GTX 1050 is a small, cheap card that most people use for casual gaming. As a compute card for molecular dynamics, the best things about it are the price of acquiring one used ($75 or less as of 2019), the small size, and no need for an external PCI-Express power connector. Thus, people with low-end desktops and small form-factor PCs could probably add one of these without much trouble. I’m going to miss how easily this thing fits in my computer case…

At the end of the day, it was a slow card, making only 150K PPD. The efficiency wasn’t that good either. If you’re going to burn the electricity doing charitable science, it would be good to get more science per watt than the 1050 provides. Given the meager price difference in the used market between this card and its big Titanium edition brother, go with the Ti (exact same environmental impact but more performance), or better yet, a GTX 1060.

I would encourage you to grab and fold a 16xx card, as they are a step forward compared to the 10xx lineup.

I have a 1660 Super, and compared to the 1060 it’s 30% better on Hashcat with the same power draw from the wall.

I agree, and when I get back to the blog I do plan on testing some of the Turing cards. It seems like every new generation provides about 30% efficiency improvement over the last!