My normal posts on this site are all about how to do as much science as possible with Folding@Home using the least amount of power. This is because I think disease research, while a noble and essential cause, shouldn’t be done without respecting the environment.

With that said, I think there is one use case for a power-hungry, inefficient Folding@Home computer: as a space heater for those in colder climates.

The logic is this: Running Folding@Home, or any other piece of software, makes your computer do work. Electricity flows through the circuits, flipping tiny silicon switches and producing heat in the process. Ultimately, all of the energy that flows into your computer comes back out as heat (well, a small amount comes out as light, other electromagnetic radiation, or sound, but all of those can and do get converted back into heat as they strike things in the room).
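Because essentially every watt the computer pulls from the wall ends up as heat, its wall power can be compared directly with a space heater’s rating. Heaters are often rated in BTU per hour, so here is a small sketch of the conversion using the standard factor of roughly 3.412 BTU/h per watt. The wattages in the loop are just illustrative inputs.

```python
# Standard conversion: 1 W of continuous power is about 3.412 BTU per hour.
BTU_PER_HOUR_PER_WATT = 3.412


def watts_to_btu_per_hour(watts: float) -> float:
    """Heat output in BTU/h, assuming all electrical input ends up as heat in the room."""
    return watts * BTU_PER_HOUR_PER_WATT


# Illustrative wall-power figures (watts).
for watts in (500, 1000, 1500):
    print(f"{watts} W -> about {watts_to_btu_per_hour(watts):,.0f} BTU/h")
```

From a heat standpoint, a computer drawing 1500 W at the wall delivers the same BTU/h as a 1500 W resistive heater.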

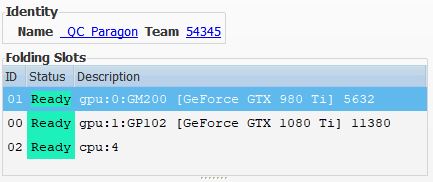

Have you ever noticed how running your gaming computer with the door to your room closed makes your feet nice and toasty in the winter? It’s the same idea. Here, one of my high-performance rigs (dual Nvidia GTX 980 Ti GPUs) is silently humming away, giving off about 500 watts of pleasant heat. My son is investigating.

My Folding@Home Space Heater Experiment

Folding@Home uses CPUs and GPUs to run molecular dynamics simulations that help researchers understand and fight diseases. You get the most points per day (PPD) by using cutting-edge hardware, but the Folding@Home Consortium and Stanford University openly encourage everyone to run the software on whatever hardware they happen to have.

With this in mind, I started thinking about all the old hardware out there: CPUs and graphics cards destined for landfills because they are no longer fast enough for useful gaming or 4K video decoding. People describe this type of hardware as “bricks” or “space heaters,” useful for nothing other than wasting power.

That gave me an idea…

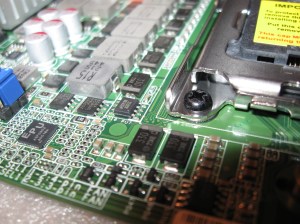

It didn’t take me long to find a sweet deal on an nForce 680i-based system on eBay for $60 shipped (an EVGA board with the Nvidia nForce 680i chipset, supporting three full-length PCI-E X16 slots). I swapped out the Core 2 Duo that this machine came with for a Core 2 Quad, and purchased four Fermi-based Nvidia graphics cards, plus a used 1300-watt Seasonic 80+ Gold power supply from eBay. All of this was amazingly cheap; the beautiful Antec case alone was worth the $60 I paid for the whole system. Because I knew ample power would be critical here, the high-end power supply was my single biggest expense. Later on, I found that I also needed to upgrade the cooling (read: cut a hole in the side panel and strap on some more fans).

- Antec Mid-Tower Case + Corsair 520 Watt PSU, EVGA 680i motherboard, Core 2 Duo CPU, 4 GB Ram, CD Drives, and 4 Fans = $60

- 2x EVGA Nvidia GeForce GTX 480 graphics cards: $40

- 1 x EVGA NVidia GeForce GTX 580 Graphics Card: $50

- 1 x EVGA NVidia GeForce GeForce GTX 460 Graphics Card: $20

- 1 x PCI-E X1 to X16 Riser: $10

- 1 x Core 2 Quad Q6600 CPU (Socket 775) – $6

- 1 x Seasonic 1300 Watt 80+ Gold Modular Power Supply: $90

- 2 x Noctua 120 MM fans + custom aluminum bracket (for modifying side panel): $60

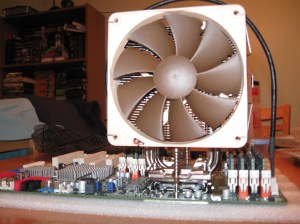

- 1 x Arctic Cooling Freezer Tower Cooler – $10

- 1 x Western Digital Black 640GB HDD – $10

Total Cost (Estimated): $356

This is the cost before I sold some of the parts I didn’t need (Core 2 Duo, Corsair PSU, etc.).
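As a quick sanity check, the listed prices do add up to the estimated total. A trivial sketch, with the dictionary keys paraphrasing the parts list above and the values copied from it:

```python
# Prices from the parts list (USD), before reselling unneeded parts.
parts = {
    "Antec case + Corsair 520 W PSU, EVGA 680i board, Core 2 Duo, 4 GB RAM, drives, fans": 60,
    "2 x EVGA GTX 480": 40,
    "1 x EVGA GTX 580": 50,
    "1 x EVGA GTX 460": 20,
    "PCI-E X1 to X16 riser": 10,
    "Core 2 Quad Q6600": 6,
    "Seasonic 1300 W 80+ Gold PSU": 90,
    "2 x Noctua 120 mm fans + bracket": 60,
    "Arctic Cooling Freezer tower cooler": 10,
    "WD Black 640 GB HDD": 10,
}


def total_cost(prices: dict) -> int:
    """Sum the prices of all parts."""
    return sum(prices.values())


print(f"Total: ${total_cost(parts)}")  # prints "Total: $356"
```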

Here is a shot of the final build. It took a bit of tweaking to get it to this point.

Used Parts Disclaimer!

Note that when dealing with used parts from eBay, it’s always good to do some basic servicing. For the GPUs in this build, I took them apart, cleaned them, applied fresh thermal paste (Arctic MX-4), and reassembled them. It’s a good thing I did: these cards were pretty gross, and the decade-old thermal paste had dried out from years of use.

I mean, come on now, look at the dust cake on the second GTX 480! Clean your graphics cards, random eBay people!

Here’s how the 3 + 1 GPUs are set up. The two GTX 480s and the GTX 580 are on the mobo in the X16 slots, and I remotely mounted the GTX 460 in the drive bay using the PCI-E riser. I used blower-style (slot exhaust) cards on purpose here, because they exhaust nearly all of their hot air out the back of the case. Open-fan style cards, which dump their heat inside the case, would have overheated quickly in this setup.

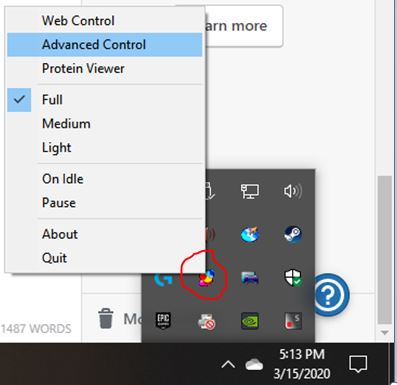

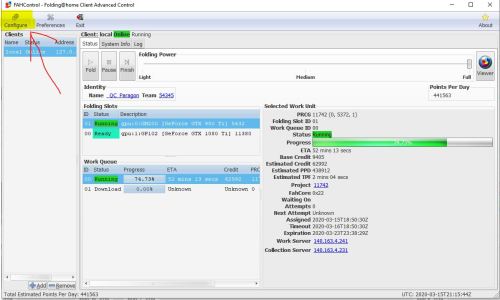

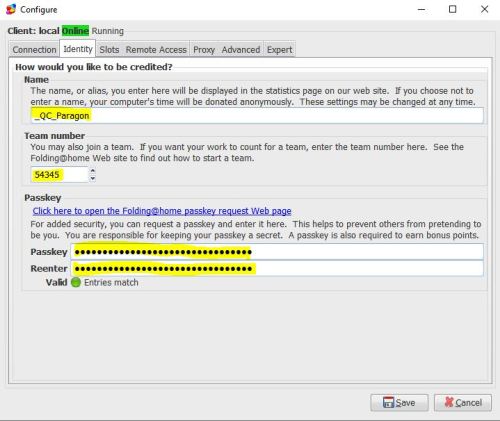

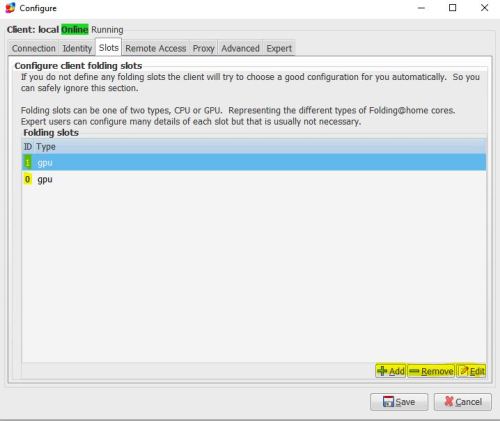

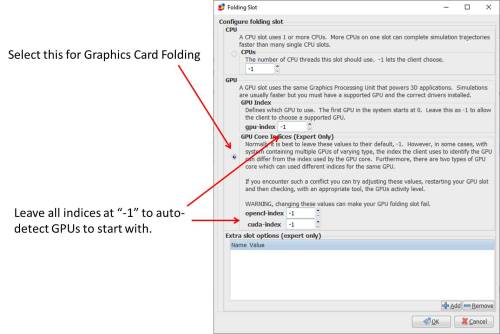

To keep costs down, I used Ubuntu Linux as the operating system and configured the machine for four-GPU folding (one folding slot per card) using the proprietary Nvidia drivers. Although I ultimately control all of my remote Linux machines with TeamViewer, it is helpful to have a portable monitor and a combo wireless keyboard/mouse for initial configuration and testing. The shot below shows an earlier configuration; I learned a lot just trying to get the machine stable with three cards.

Initial Testing on the Space Heater (3 GPUs installed). This test showed me that I needed better CPU cooling, so I chucked the stock Intel cooler.

I also did some thermal testing along the way to make sure things weren’t getting too hot. It turns out this testing was a bit misleading, because the system was running a lot cooler with the side panel off than with it on.

Some Thermal Camera Images During Initial Burn-In (3 GPUs, stock CPU cooler):

Now that’s some heat coming out of this beast! Thankfully, the upgraded 14-gauge power plug and my watt meter aren’t at risk of melting, although they are pretty warm.

Once I had the machine up and running with all four GPUs in the final configuration, I found that it produced about 55-95K PPD on average (depending on the work unit), with the following breakdown:

- GTX 460: 10-20K PPD

- GTX 480: 20-30K PPD each

- GTX 580: 25-45K PPD

Power consumption, as measured at the wall, ranged from 900 to 1000 watts with all four GPUs engaged. By turning different GPUs on and off, I could vary the power draw, down to about 200 watts at idle. I typically ran it with one GTX 580 and one GTX 480 folding, for an average power consumption of about 600 watts.
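Left running around the clock, those operating points translate into daily energy use as shown in this rough sketch. It assumes 24-hour operation and uses 950 W as a hypothetical stand-in for the measured 900 to 1000 W full-load range; neither figure is a separate measurement.

```python
# Approximate wall-power operating points from the text (watts).
OPERATING_POINTS_W = {
    "idle": 200,
    "typical (one GTX 580 + one GTX 480 folding)": 600,
    "all four GPUs (assumed midpoint of 900-1000 W)": 950,
}

HOURS_PER_DAY = 24  # assumption: the machine runs continuously


def kwh_per_day(watts: float, hours: float = HOURS_PER_DAY) -> float:
    """Energy in kWh consumed by a constant load of `watts` over `hours`."""
    return watts * hours / 1000


for name, watts in OPERATING_POINTS_W.items():
    print(f"{name}: {watts} W -> {kwh_per_day(watts):.1f} kWh/day")
```

Multiplying each kWh figure by your own electricity rate gives the daily cost of that heat setting.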

After running the machine for a while, my room was nice and toasty, as expected!

One thing I should mention is the effect of the two additional intake fans that I mounted in the side panel. Originally I did not have these, and the top graphics card in the stack was hitting 97 degrees C according to its onboard monitoring! After modding this custom side intake into the case (I found a nice fan bracket on Amazon and put my Dremel tool to good use), the temps went down considerably. I used fan grilles on the inside of the fans to keep internal cables out of the blades, and mesh filters on the outside to match the intake filters on the rest of the case.

The top card stays under 85 degrees C (with its fan at 50%), the middle card stays under 80 degrees C, and the bottom card runs at 60 degrees C. The GTX 460 mounted in the drive bay never goes over 60 degrees C, though it’s a less powerful card and is mounted on the other side of the case.

Here are some more pictures of the modded side panel, along with a little cooling diagram I threw together:

PPD, Wattage, and Efficiency Comparison

I debated whether to include these plots, because the point of this machine was not primarily to make points (pun intended) or to be efficient from a PPD/Watt perspective. The point of this machine was to replace the 1500-watt space heater I use in the winter to keep a room warm.

As you can see, the scientific production (PPD) on this machine, even with four GPUs, is not all that impressive in 2020, since the GPUs are ten years old. Similarly, the efficiency (PPD/Watt) is terrible. That’s no surprise, since with all four GPUs folding it draws just under 1000 watts at the wall!
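The efficiency metric is simply points per day divided by wall watts. This sketch brackets the full-load efficiency from the ranges quoted earlier (55-95K PPD at 900-1000 W). Pairing the lowest PPD with the highest wattage, and vice versa, is a simplification for illustration, not how the measured data were actually paired.

```python
def ppd_per_watt(ppd: float, watts: float) -> float:
    """Folding efficiency: points per day divided by wall power in watts."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return ppd / watts


# Full-load figures quoted in the text: 55-95K PPD at 900-1000 W.
low = ppd_per_watt(55_000, 1000)   # pessimistic pairing: low PPD, high power
high = ppd_per_watt(95_000, 900)   # optimistic pairing: high PPD, low power
print(f"Full-load efficiency: roughly {low:.0f} to {high:.0f} PPD/W")
```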

Conclusion

It is totally possible to build a (relatively) inexpensive desktop computer out of old, used parts to use as a space heater. If the primary goal is to make heat, then this might not be a bad idea (although at $350, it still costs way more than a $20 heater from Walmart). The obvious benefit is that this sort of space heater is actually doing something useful besides keeping you warm (in this case, helping scientists learn more about diseases thanks to Folding@Home).

Another benefit I found was remote control (via TeamViewer), which lets me use my cellphone to turn GPUs on and off to vary the heat output. Also, I think running this machine for extended periods at its medium-high setting (700 watts or so) is much healthier for the electrical wiring in my house than the constant on-off cycling of a traditional 1500-watt space heater.
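The wiring argument comes down to current draw. A minimal sketch, assuming a nominal 120 V North American circuit and a power factor close to 1 (both assumptions; a real PSU’s power factor is slightly below 1, so its actual current is a bit higher):

```python
# Assumption: nominal North American household line voltage.
LINE_VOLTAGE_V = 120.0


def current_amps(watts: float, volts: float = LINE_VOLTAGE_V) -> float:
    """Approximate current drawn by a load, assuming a power factor of ~1 (I = P / V)."""
    return watts / volts


loads_w = {
    "F@H rig, medium-high setting": 700,
    "F@H rig, all four GPUs": 1000,
    "1500 W space heater on high": 1500,
}

for label, watts in loads_w.items():
    print(f"{label}: {watts} W -> {current_amps(watts):.1f} A")
```

At 120 V the 1500 W heater draws 12.5 A, roughly twice the 5.8 A or so the rig draws at 700 W.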

From an environmental standpoint, you can do much worse than using electric heat. In my case, electric space heaters make a lot of sense, especially at night: I can shut off the heat to the entire upstairs zone (my house has only two zones) and just keep the bedroom warm. This drastically reduces my fossil fuel usage (good old New England, where home heating oil is the primary method of keeping warm in the winter). Since my house has an 8.23 kW solar panel array on the roof, a lot of my electricity comes directly from the sun, making this electric heat solution even greener.

Parting Thoughts:

I would not recommend running a machine like this during the warmer months. If warm air is not wanted, all the waste heat from this machine will do nothing but rack up your power bill for relatively little science. If you want to run an efficient summertime F@H rig that uses little power (so as not to fight your AC), check out my article on the GTX 1660 and GTX 1650.

In a future article, I plan to show how I actually saved on heating costs by running Folding@Home space heaters all last winter (with a total of seven Folding@Home desktops placed strategically throughout my house, so that I hardly had to burn any oil).