Hello again.

Today, I’ll be reviewing the AMD Radeon RX 580 graphics card in terms of its computational performance and power efficiency for Stanford University’s Folding@Home project. For those that don’t know, Folding@Home lets users donate their computer’s computations to support disease research. This consumes electrical power, and the point of my blog is to look at how much scientific work (Points Per Day or PPD) can be computed for the least amount of electrical power consumption. Why? Because in trying to save ourselves from things like cancer, we shouldn’t needlessly pollute the Earth. Also, electricity is expensive!

The Card

AMD released the RX 580 in April 2017 with an MSRP of $229. This is an updated card based on the Polaris architecture. I previously reviewed the RX 480 (also Polaris) here, for those interested. I picked up my MSI-flavored RX 580 in 2019 on eBay for about $120, which is a pretty nice depreciated value. Those who have been following along know that I prefer to buy used video cards that are 2-3 years old, because of the significant initial cost savings, and the fact that I can often sell them for the same as I paid after running Folding for a while.

I ran into an interesting problem installing this card: at 11 inches long, it was about a half inch too long for my old Raidmax Sagitta gaming case. The solution was to take the fan shroud off, since it was the part that was sticking out ever so slightly. This involved an annoying amount of disassembly, since the fans actually needed to be removed from the heat sink for the plastic shroud to come off. Reattaching the fans was a pain (you need a teeny screwdriver that can fit between the fan blade gaps to get the screws by the hub).

RX 580 with Fan Shroud Removed. Look at those heat pipes! This card has a 185 Watt TDP (Board Power Rating).

RX 580 Installed (note the masking tape used to keep the little side LED light plate off of the fan)

Now That’s a Tight Fit (the PCI Express Power Plug on the video card is right up against the case’s hard drive bays)

The Test Setup

Testing was done on my rather aged, yet still able, AMD FX-based system using Stanford’s Folding@Home V7 client. Since this is an AMD graphics card, I made sure to switch the video card mode to “compute” within the driver panel. This optimizes things for Folding@Home’s workload (as opposed to games).

Test Setup Specs

- Case: Raidmax Sagitta

- CPU: AMD FX-8320e

- Mainboard : Gigabyte GA-880GMA-USB3

- GPU: MSI Radeon RX 580 8GB

- Ram: 16 GB DDR3L (low voltage)

- Power Supply: Seasonic X-650 80+ Gold

- Drives: 1x SSD, 2 x 7200 RPM HDDs, Blu-Ray Burner

- Fans: 1x CPU, 2 x 120 mm intake, 1 x 120 mm exhaust, 1 x 80 mm exhaust

- OS: Win10 64 bit

- Video Card Driver Version: 19.10.1

Performance and Power

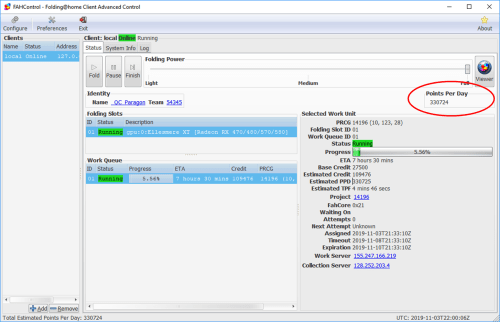

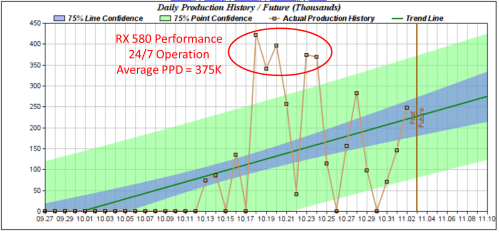

I ran the RX 580 through its paces for about a week in order to get a good feel for a variety of work units. In general, the card produced as high as 425,000 points per day (PPD), as reported by Stanford’s servers. The average was closer to 375K PPD, so I used that number as my final value for uninterrupted folding. Note that during my testing, I occasionally used the machine for other tasks, so you can see the drops in production on those days.

I measured total system power consumption at the wall using my P3 Watt Meter. The system averaged about 250 watts. That’s on the higher end of power consumption, but then again this is a big card.
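As a quick sanity check on the numbers above, efficiency is just average PPD divided by wall power. The sketch below uses the 375K PPD and 250 watt figures from this test. The electricity price is a placeholder chosen purely for illustration, so swap in your own rate.

```python
# Efficiency and running-cost estimate from the figures measured in this review.
AVG_PPD = 375_000      # average points per day (from this article)
WALL_WATTS = 250       # average system draw at the wall (from this article)
PRICE_PER_KWH = 0.15   # ASSUMPTION: placeholder electricity price in $/kWh


def ppd_per_watt(ppd: float, watts: float) -> float:
    """Folding efficiency: points per day for each watt drawn at the wall."""
    return ppd / watts


def kwh_per_day(watts: float) -> float:
    """Energy used in 24 hours of uninterrupted folding."""
    return watts * 24 / 1000


efficiency = ppd_per_watt(AVG_PPD, WALL_WATTS)
energy = kwh_per_day(WALL_WATTS)
daily_cost = energy * PRICE_PER_KWH

print(f"Efficiency: {efficiency:.0f} PPD/watt")
print(f"Energy: {energy:.1f} kWh/day")
print(f"Cost at ${PRICE_PER_KWH:.2f}/kWh: ${daily_cost:.2f}/day")
```

With the measured values this works out to 1,500 PPD per watt and 6 kWh per day of uninterrupted folding.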

Comparison Plots

Conclusion

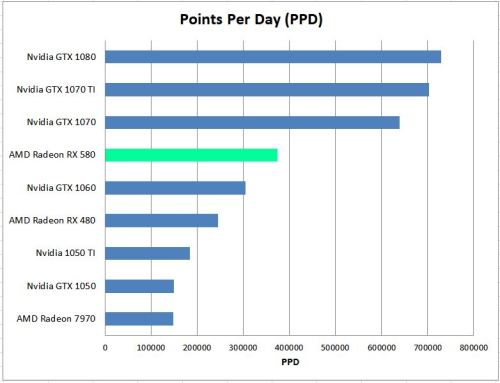

For $120 used on eBay, I was pretty happy with the RX 580’s performance. When it was released, it was directly competing with Nvidia’s GTX 1060. All the gaming reviews I read showed that Team Red was indeed able to beat Team Green, with the RX 580 scoring 5-10% faster than the 1060 in most games. The same is true for Folding@Home performance.

However, that is not the end of the story. Where the Nvidia GTX 1060 has a 120 Watt TDP (Thermal Design Power), AMD’s RX 580 needs 185 Watts. It is a hungry card, and that shows up in the efficiency plots, which take the raw PPD (performance) and divide it by the power consumption in watts that I measure at the wall. Here, the RX 580 falls a bit short, although it is still a healthy improvement over the previous generation RX 480.

Thus, if you care about CO2 emissions and the cost of your folding habits on your wallet, I am forced to recommend the GTX 1060 over the RX 580, especially because you can get one used on eBay for about the same price. However, if you can get a good deal on an RX 580 (say, for $80 or less), it would be a good investment until more efficient cards show up on the used market.

I realize this was posted a while ago, but I just came across your site, which I love. I also picked up an rx580 on the cheap ($100 on eBay) and am using it mostly for folding. It’s true that at default settings, the rx580 is not efficient, but AMD was doing whatever they could to up the performance, efficiency be damned. On my card, I lowered the frequency from 1366 MHz to 1250 MHz, and the voltage from 1150 mV to 950 mV, and the card is stable and running at about 80 Watts. So much better efficiency is possible.
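For a rough idea of why undervolting helps so much: the dynamic power of a chip scales roughly with clock frequency times voltage squared. The sketch below applies that rule of thumb to the settings quoted above. It ignores static leakage, memory, and fan power, so treat the result as a ballpark and not a prediction of what a given card will draw.

```python
def dynamic_power_ratio(f_old_mhz: float, v_old_mv: float,
                        f_new_mhz: float, v_new_mv: float) -> float:
    """New/old dynamic power under the P ~ f * V^2 rule of thumb.

    ASSUMPTION: only dynamic (switching) power is modeled; leakage and
    non-core board power are ignored.
    """
    return (f_new_mhz / f_old_mhz) * (v_new_mv / v_old_mv) ** 2


# Stock 1366 MHz @ 1150 mV versus undervolted 1250 MHz @ 950 mV.
ratio = dynamic_power_ratio(1366, 1150, 1250, 950)
print(f"Estimated dynamic power: {ratio:.0%} of stock")
```

Most of the saving comes from the voltage term, since it is squared, while the clock drop costs comparatively little performance.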

Wow that’s an awesome result! And it makes sense…typically gaming cards are competing for benchmark scores. I will try and do an article where I downvolt / underclock an AMD card in the future.

Still tuning my card, but I have some data on efficiency with the rx580. I’m running FAHBench 2.3.1 w/ OpenMM 7.4.1 in mixed precision mode, WU real (higher power than WU dhfr):

Std — Default out of the box setup, 1366MHz@1150mV

OC — Radeon Auto-Overclock feature, 1436MHz@unknown voltage

UV — Manual undervolting to 1250MHz@950mV

| Setting | GPU Watts | Watts at the wall | Relative performance |
|---|---|---|---|
| Std | 106 | 218 | baseline |
| OC | 120 | 245 | +3.8% |
| UV | 75 | 168 | -6.3% |

A few notes:

- The GPU power is reported by the Radeon software, and I believe it is just the chip, not the total board power.

- The undervolting is stable. I had even lower settings running in FAHBench, but they threw exceptions when running actual work units.

- I’ve seen reviews where folks say their rx580 “sounds like a jet taking off”. Now I get it: that OC feature is LOUD.

It seems obvious that, at least for these AMD cards, there is tremendous room for efficiency improvements if you’re willing to get away from the default settings.
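To put a number on that, the table above can be turned into performance per wall watt, normalized to the stock setting. This is only a sketch using the three measurements quoted in this comment; the dictionary layout and function name are made up for illustration.

```python
# Wall power (watts) and performance relative to stock, from the table above.
settings = {
    "Std": {"wall_watts": 218, "rel_perf": 1.000},
    "OC": {"wall_watts": 245, "rel_perf": 1.038},
    "UV": {"wall_watts": 168, "rel_perf": 0.937},
}


def relative_efficiency(name: str, baseline: str = "Std") -> float:
    """Performance per wall watt, normalized to the baseline setting."""
    s, b = settings[name], settings[baseline]
    return (s["rel_perf"] / s["wall_watts"]) / (b["rel_perf"] / b["wall_watts"])


for name in settings:
    print(f"{name}: {relative_efficiency(name):.2f}x stock efficiency")
```

By this measure the undervolted setting comes out roughly 20% more efficient at the wall than stock, while the auto-overclock is less efficient than stock.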

I got back into folding during the COVID-19 spike (although I jumped back in early enough for the beta). I normally only get 2 WU a day since I game for a few hours in the evening, but I am able to stably run with the following settings:

(Graphics mode, because I never thought to do compute mode)

Stage 7 1340MHz@1000mV

Stage 6 1300MHz@975mV

301-330k per day

I’ve a set of settings that are stable in the FAHBench that are even lower, but those don’t seem to translate to stable real work units.

I’ll be checking compute mode out after my next WU ends.

Cool, welcome back! I wish I still had my RX 580 test card (sold it on eBay). I’d like to run some extended testing on compute vs. graphics mode, say 20 work units or so on each, to get an average answer on how much better compute mode is. Looking forward to seeing how compute mode works for you.

Is it possible that switching from graphics to compute mode and back in the middle of a gpu job could mess things up? After doing that, I noticed that my RX 580 had missed timeout, was barely going to get to expiry in time, and had somehow acquired another job in its queue.

That’s not normal…I have switched the mode mid work unit without issue. Is it running more stably now that the mode is switched? What kind of PPD is the client reporting?

Normally about 200k, now 114113; it is about half an hour away from finishing this job, the other is still in the queue. So it’s good to know that switching modes did not cause this to happen, whatever weird thing did happen. First timeout miss for this unit, I believe.

And it should be done by now, but it isn’t, & ppd is dropped to 68 for the gpu slot, so I’ve put it on finish and will reboot when it has finished or missed expiration, which it probably will now. Strange.

68k that is.

Huh, seems pretty low. Are you folding on the CPU as well by any chance?

Yes, the CPU (Ryzen 7 3700) is currently being rated at 150067 PPD and the GPU at 119898. This is rather low compared to what it has sometimes been. The last time I ran the system overnight, the collective rating got to a bit over 700k, but it has seemed to fluctuate wildly. Until the last few days it was c. 500k, folding c. 16 hrs/day. I have been assuming that the PPD ratings were inherently rather inconsistent.

There’s about a 10-20% variation in PPD from work unit to work unit. One thing that might be happening in your case is a bit of a bottleneck. How many threads is the CPU client set to? On a 3700 (8 core, 16 thread CPU), it’s best to set the CPU client to something less than the max # of threads if you are also folding on the GPU. This allows the CPU to “feed” the GPU so that it doesn’t slow down / starve for work. In Windows, I usually go a bit extra and reserve one full core (i.e. 2 threads on a multi-threaded CPU) to feed the card. So for the 3700 that would be a CPU setting of 14 (leaving two threads free to feed the GPU). This might help if your current CPU slot is set to 16.

More info that you might not need:

One weird tidbit here…due to Folding@Home’s performance issues with CPU thread counts that are multiples of large prime numbers, 14 (a multiple of 7) actually causes the client to run with 12 threads instead. Similarly, a CPU setting of 13 (a large prime) causes numerical problems, so the client down-selects to 12 threads yet again. So, you really only have three possible “high performance” CPU settings for a 16-thread chip: 16, 15, and 12. You can set the CPU slot to 14 or 13, but it will internally just use 12 anyway. You can experiment with this setting to see what gives you the max combined PPD between the CPU and the GPU. I’m guessing setting the CPU slot to 12 or 15 will work noticeably better than 16, because that way the CPU and GPU aren’t fighting over the same resources.
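The down-selection described above can be sketched in a few lines. To be clear, this is not the client’s actual code: the rule used here (step down until the thread count has no prime factor larger than 5) is an assumption that happens to reproduce the 16, 15, and 12 values mentioned in this comment, and the function names are made up.

```python
MAX_PRIME = 5  # ASSUMPTION: prime factors of 7 and up count as "large"


def largest_prime_factor(n: int) -> int:
    """Return the largest prime factor of n (for n >= 2)."""
    largest = 1
    factor = 2
    while factor * factor <= n:
        while n % factor == 0:
            largest = factor
            n //= factor
        factor += 1
    if n > 1:  # whatever remains is itself a prime factor
        largest = n
    return largest


def effective_threads(requested: int) -> int:
    """Step down from the requested count until no large prime factor remains."""
    n = requested
    while n > 1 and largest_prime_factor(n) > MAX_PRIME:
        n -= 1
    return n


for requested in (16, 15, 14, 13, 12):
    print(requested, "->", effective_threads(requested))
```

Under this assumed rule, requests of 14 and 13 both land on 12, which matches the behavior described for a 16-thread chip.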

So my variation has been over a considerably wider range than usual. There aren’t any big changes in regime, mostly LaTeX and reading, with folding paused for gaming for 1-2 hrs most days.

Thread # was being ‘chosen by the client’. I’ve just set it to 15. The gpu also has a CPU thread setting, which I’ve left at -1 (they seem to use the same screen).

Another possibly related factoid is that on this system, after login, it’s not uncommon for the core to crash a few times. There is no such problem on any of the other, considerably older systems I’m running.

Well that may have fixed the GPU’s problem … its PPD is now at 486584, CPU 155200.

That makes a lot more sense. The client picks thread counts for the CPU without regard to any other applications (including GPU folding slots) that may be running. Now you’ve got a dedicated thread free to keep the GPU happy.

Let’s hope it solves all the problems. The folding web page docco seems to me like a complete mess, like a barn loft with 15 years of manuals for different releases tossed on top of each other with no organization to sort out the different versions. Slot configuration is not the sort of thing that the sensible beginner would undertake given the warnings against doing it if you don’t know what you’re doing.

I wish I had found this page 3 months ago!

Same here, I wish I had found this page sooner. I started running F@H when my workstation is idle, but will check if I can spare a core just for the GPU.

Since I don’t need a graphics card just for F@H, would you be interested in testing the performance of the low power P106-100, or even the P102-100 mining cards? They are factory tweaked for computing tasks but don’t have the price tag of a graphics card. It seems the price is about US$30 for a P106 coming from China, which looks interesting for the performance. My workstation has 3 PCIe 3.0 x16 slots; could I use one for graphics and two of these P106-100s for F@H?