Welcome back. In the last article, I found that the GeForce GTX 1080 is an excellent graphics card for contributing to Stanford University’s charitable distributed computing project Folding@Home. For Part 2 of the review, I did some extended testing to determine the relationship between the card’s power target and Folding@Home performance & efficiency.

Setting the graphics card’s power target to something less than 100% essentially throttles the card back (lowers the core clock) to reduce power consumption and heat. Performance generally drops off, but computational efficiency (performance per watt of power used) can be a different story, especially for Folding@Home. If the power consumed by the card drops off faster than the card’s performance (measured in Points Per Day for Folding@Home), then the efficiency can actually go up!
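To make the efficiency idea concrete, here is a minimal sketch of the performance-per-watt calculation. The function name and the numbers are hypothetical, chosen only to illustrate the arithmetic; they are not measurements from this review.

```python
def efficiency(ppd: float, watts: float) -> float:
    """Computational efficiency in PPD per watt of power used."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return ppd / watts


# Hypothetical numbers for illustration only (not measured values).
# Performance drops 10% while power drops 25%, so efficiency rises.
baseline = efficiency(ppd=1_000_000, watts=400)
throttled = efficiency(ppd=900_000, watts=300)

print(f"baseline:  {baseline:.0f} PPD/Watt")
print(f"throttled: {throttled:.0f} PPD/Watt")
```

In this made-up case the throttled card scores fewer points but earns more points per watt, which is the effect the power target testing is looking for.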

Test Methodology

The test computer and environment were the same as in Part 1. Power measurements were made at the wall with a P3 Kill A Watt meter, using the kWh function to track the total energy used by the computer and then dividing by the recorded uptime to get the average power over the test period. Folding@Home PPD returns were taken from Stanford’s collection servers.
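The average power calculation described above is just energy divided by time. A minimal sketch, assuming the meter reading is in kWh and the uptime is in hours (the function name and the example reading are hypothetical):

```python
def average_power_watts(energy_kwh: float, uptime_hours: float) -> float:
    """Average wall power in watts from a cumulative kWh reading and uptime."""
    if uptime_hours <= 0:
        raise ValueError("uptime_hours must be positive")
    # 1 kWh = 1000 Wh, and Wh divided by hours gives watts.
    return energy_kwh * 1000.0 / uptime_hours


# Hypothetical reading: 33.6 kWh accumulated over one week (168 hours).
avg_watts = average_power_watts(energy_kwh=33.6, uptime_hours=168)
print(f"{avg_watts:.1f} W average")
```

Using the accumulated energy rather than spot readings of instantaneous power smooths out the fluctuations that occur as work units start and finish.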

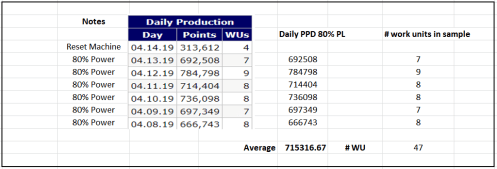

To get useful statistics, I set the card’s power limit via MSI Afterburner and let the card run for a week at each setting. Averaging the results over many days is needed to reduce the variability seen across work units. For example, I averaged 47 work units to come up with the performance of 715K PPD for the 80% Power Limit case:

The only outliers I tossed were one day when my production was messed up by thunderstorms (unplug your computers if there is lightning!), plus one of the days at the 60% power setting, where for some reason the card did almost 900K PPD (it probably got a string of high-value work units). Other than that, the data was not massaged.

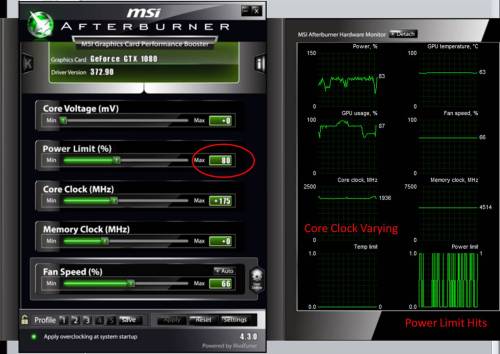
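The averaging with excluded days described above can be sketched as follows. The data layout (a dict of day label to PPD), the function name, and the sample values are assumptions for illustration, not the actual data from this test.

```python
from statistics import mean


def average_ppd(daily_ppd: dict, excluded_days=()) -> float:
    """Mean PPD over all days except those explicitly flagged as outliers."""
    kept = [ppd for day, ppd in daily_ppd.items() if day not in excluded_days]
    if not kept:
        raise ValueError("no days left after exclusions")
    return mean(kept)


# Hypothetical daily results for one power limit setting.
sample = {
    "day1": 700_000,
    "day2": 720_000,
    "day3": 900_000,  # unusually high day, flagged by hand
}
result = average_ppd(sample, excluded_days={"day3"})
print(f"{result:,.0f} PPD")
```

Excluding days by hand, with the reason written down, keeps the adjustment transparent; an automatic outlier filter would be an alternative, but that is not what was done here.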

I tested the card at 100% power target, then at 80%, 70%, 60%, and 50%. Setting 90% did not produce any difference vs 100% because folding doesn’t max out the graphics card: it was essentially folding at around 85% of the card’s power limit even when set to 90% or 100%.

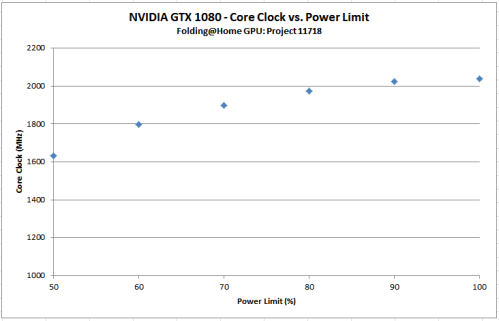

I left the core clock boost setting the same as my final test value from the first part of this review (+175 MHz). Note that this offset won’t force the card to run at a fixed faster speed; once the card is constantly hitting the power limit, the core clock drops. I had to reduce the power limit to 80% to start seeing an effect on the core clock. Further reductions in power limit produced further reductions in clock rate, as expected. The approximate relationship between power limit and core clock was this:

Results

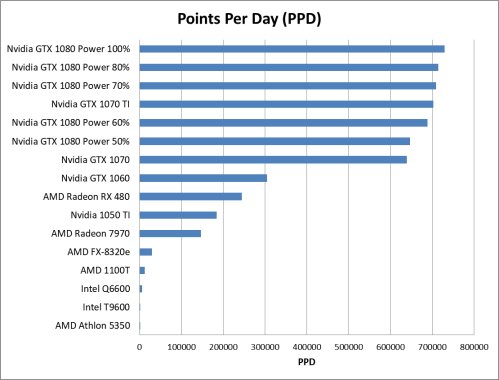

As expected, the card’s raw performance (measured in Points Per Day) drops off as the power target is lowered.

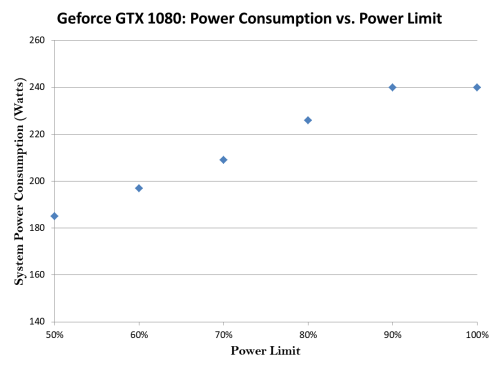

The system power consumption plot is also very interesting. As you can see, I’ve shaved a good amount of power draw off of this build by downclocking the card via the power limit.

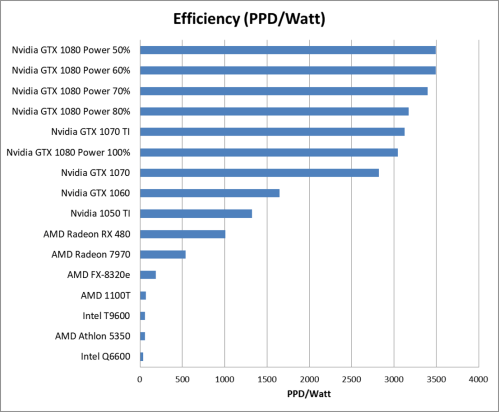

By far, the most interesting result is what happens to the efficiency. Basically, I found that efficiency increases (to a point) with decreasing power limit. I got the best system efficiency I’ve ever seen with this card set to 60% power limit (50% power limit essentially produced the same result).

Conclusion

For NVIDIA’s GeForce GTX 1080, decreasing the graphics card’s power limit can actually improve the efficiency of the card for scientific computing in Folding@Home. This is similar to what I found when reviewing the 1060. My recommended setting for the 1080 is a power limit of 60%, because that provides a system efficiency of nearly 3500 PPD/Watt and maintains a raw performance of almost 700K PPD.
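As a rough sanity check, the two rounded figures in the recommendation imply a system power draw. This sketch uses the rounded values from the text (3500 PPD/Watt and 700K PPD), so the result is only approximate; the measured value is the one in the power consumption plot.

```python
def implied_power_watts(ppd: float, ppd_per_watt: float) -> float:
    """System power implied by a performance figure and an efficiency figure."""
    if ppd_per_watt <= 0:
        raise ValueError("ppd_per_watt must be positive")
    return ppd / ppd_per_watt


# Rounded figures from the conclusion: "almost 700K PPD" at "nearly 3500 PPD/Watt".
approx_watts = implied_power_watts(ppd=700_000, ppd_per_watt=3500)
print(f"roughly {approx_watts:.0f} W at the wall")
```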

Keep up the great work Chris, I love this blog and am following it faithfully.

By the way, my PPD and PPD/watt numbers are considerably higher than you report for the 1060 6GB. I get about 370K PPD, and I undervolt the GPU to 925 mV using MSI Afterburner.

I’m wondering if this discrepancy is due to the fact that I use the newest Nvidia drivers (which would explain the higher PPD), and the fact that I am undervolting it (which reduces its power draw considerably). It would be awesome if you did a post on undervolting, since I think it is a more promising way to improve efficiency than simply reducing the power limit–but that’s hearsay and I have not seen anyone compare the two methods back to back.

Best,

Jason

Thanks Jason. Good info on the 1060. I always wondered if I’d had a bit of a dud…it didn’t do as well as the community consensus would seem to indicate.

Regarding voltage tuning, I basically relied on the power limit to do that for me (it’s automatic). As the power limit is reduced, the card’s voltage is reduced (and thus the frequency). The card is following a pre-programmed voltage-frequency curve. The reduced voltage (due to the lower power limit) is what is causing the power reduction. The card is ensuring things remain stable by clocking down to match the reduced voltage per the curve. You can see the card’s voltage-frequency curve by hitting CTRL+F in MSI Afterburner (sorry if you know all this already).
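To illustrate why lowering voltage along with frequency cuts power so effectively, here is a sketch based on the textbook first-order approximation that dynamic power in CMOS logic scales with voltage squared times frequency. This is an assumption added for illustration: it ignores static leakage, memory, and the rest of the system, and the scaling factors below are hypothetical rather than read from the card’s actual curve.

```python
def relative_dynamic_power(voltage_ratio: float, frequency_ratio: float) -> float:
    """Dynamic power relative to baseline, using the approximation P ~ V^2 * f."""
    if voltage_ratio <= 0 or frequency_ratio <= 0:
        raise ValueError("ratios must be positive")
    return voltage_ratio ** 2 * frequency_ratio


# Hypothetical step down the curve: 10% lower voltage and 10% lower clock.
ratio = relative_dynamic_power(voltage_ratio=0.9, frequency_ratio=0.9)
print(f"dynamic power falls to about {ratio:.0%} of baseline")
```

Under this approximation a 10% clock reduction that comes with a 10% voltage reduction removes well over 10% of the dynamic power, which is consistent with efficiency improving as the power limit is lowered.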

Now I could go and mess with this curve…the idea I think you are suggesting would be to reduce the voltage a bit at the frequency the card is running at for folding and see if it is stable. If it is stable, then that would be a clear win (less power for the same performance). I was hesitant to try it because I like keeping a bit of a stability buffer there for Folding@Home. In video games, a card might glitch, causing artifacts in the game, if the voltage is too low. In folding, it can cause errors in the science, which could cause a work unit to fail.

Now that I think about it though, folding does do error checking (that’s why work units fail, instead of reporting back full of errors). So a cool test would be to take a few percentage points of voltage out and see if it causes any work units to fail. If not…then yay! I’ll think about this for a future article.


Hi, thanks for posting this.